You’re a marketing lead or founder at an SA service business. You’re spending somewhere between R15,000 and R100,000 a month on paid ads. You’ve been hearing about AI agency this, AI agency that, and you’re trying to work out whether the next sales call you sit through is a real operator or a consultancy with good slides.

This post is the AI agency vetting guide. Five evaluation steps for choosing an AI agency, a 60-day pilot framework, the exact questions to ask any AI agency sales team, the red flags to walk away from, and the contract clauses that protect you when things go wrong. No hype, no magic-AI narratives, and zero patience for handwaving. If you’re about to evaluate an AI agency for your SA service business, start here.

Why “AI agency” is suddenly everyone’s conversation

Before you start evaluating — set one primary KPI

Before you sit through a single sales call, do this internally: write down one primary KPI and a numeric target.

Not three KPIs. Not a balanced scorecard. One number. Pinned to the wall.

The options for an SA service business running paid ads:

- Cost-per-qualified-lead (CPQL) — the lead has to meet a defined qualification threshold (budget, timeline, fit), not just submit a form

- LTV:CAC — lifetime value of a customer divided by the cost to acquire them; useful if you have a mature CRM and known retention data

- Incremental revenue — revenue attributable to the ads that wouldn’t have happened otherwise; the hardest to measure but the most honest number

- Return on ad spend (ROAS) — gross or net, calculated consistently; useful but easily gamed by retargeting-heavy campaigns

Whichever you pick, attach a number you’d be happy with and a timeline to hit it. Example: “CPQL under R1,200 within 60 days, with at least 25% of leads converting to discovery calls.”
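To make that target checkable rather than aspirational, here is a minimal Python sketch of the ratio KPIs above and the example target. The `PilotWindow` fields, the `hits_target` helper, and the sample figures are illustrative assumptions, not a standard; swap in whatever your CRM actually exports.

```python
from dataclasses import dataclass


@dataclass
class PilotWindow:
    """Totals for one reporting window. All field names are hypothetical."""
    ad_spend: float        # rand spent on ads
    qualified_leads: int   # leads that met your written qualification threshold
    discovery_calls: int   # qualified leads that went on to a discovery call
    revenue: float         # revenue attributed to the ads


def cpql(w: PilotWindow) -> float:
    """Cost per qualified lead; infinite if nothing qualified."""
    return w.ad_spend / w.qualified_leads if w.qualified_leads else float("inf")


def roas(w: PilotWindow) -> float:
    """Gross return on ad spend."""
    return w.revenue / w.ad_spend if w.ad_spend else 0.0


def ltv_to_cac(lifetime_value: float, acquisition_cost: float) -> float:
    """Customer lifetime value divided by the cost to acquire that customer."""
    return lifetime_value / acquisition_cost


def hits_target(w: PilotWindow, max_cpql: float = 1200.0,
                min_call_rate: float = 0.25) -> bool:
    """Example target from the text: CPQL under R1,200 and at least 25% call rate."""
    call_rate = w.discovery_calls / w.qualified_leads if w.qualified_leads else 0.0
    return cpql(w) < max_cpql and call_rate >= min_call_rate


# Hypothetical 60-day window: R60,000 spend, 55 qualified leads, 15 discovery calls.
window = PilotWindow(ad_spend=60_000, qualified_leads=55,
                     discovery_calls=15, revenue=180_000)
print(round(cpql(window), 2), roas(window), hits_target(window))
```

The point of writing it down this way is that the qualification threshold and the call-rate floor become explicit inputs, so nobody can quietly redefine them mid-pilot.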

Without that number, no AI agency can tell you whether they’re winning or losing. And you can’t evaluate a pilot if you don’t know what “success” looks like.

Also pull your last 90 days of channel-level performance as your day-zero baseline before the agency’s pilot starts. This is the comparison set the incrementality test will be run against. No baseline, no incrementality claim.

Evaluation 1 — pricing model and incentive alignment

Pricing is where most AI agency evaluations go wrong — not because the numbers are unfair but because the structure creates the wrong incentives.

The four pricing models you’ll see from an AI agency:

- Pure retainer + ad spend markup. The agency is paid a fixed monthly fee plus a percentage of your ad spend, regardless of what the campaigns produce. This is the traditional agency model. It pays agencies to keep the retainer alive, not to produce results. Avoid for a first engagement.

- CPA or ROAS-based fees. The agency is paid per qualified lead, per sale, or per unit of ROAS. Incentives are directionally aligned. Watch the definitions carefully — “qualified lead” can be defined down to meaninglessness.

- Retainer plus performance bonus. A small retainer covers fixed operational overhead, plus a performance share tied to incremental revenue or a KPI threshold. This hybrid is often the most realistic for both sides because it covers the agency’s setup work while keeping upside aligned with client outcomes.

- Earn-before-charges / performance-only. The agency absorbs operational cost during a proof sprint and only charges once measurable growth is produced. This is the model PRIXGIG operates on and it shifts almost all of the early-stage risk onto the agency. Incentives are maximally aligned.

Which one is right for you depends on your risk tolerance and margin structure. For a first engagement, in our experience working with SA service businesses, you should push hard toward a hybrid or performance-weighted structure. A pure retainer on month one with no performance clauses is a signal the agency isn’t confident they can deliver.

Questions to ask every AI agency about pricing:

- Show me a worked example of how your fees scale with performance, including the edge cases where minimums or floors kick in

- What happens to the fee if the primary KPI isn’t hit by day 60?

- Are there clawbacks for invalid conversions or refunded sales?

- What is the minimum engagement term, and what are the early-termination triggers in both directions?

If the answers are vague or the agency gets nervous when asked to show the maths, that’s your answer.
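If you want to see what "show the maths" could look like, the sketch below prices one hypothetical month under each of the four models. Every rate, retainer, floor, and volume here is an invented example for illustration, not a market benchmark or any agency's actual fee schedule.

```python
def retainer_plus_markup(ad_spend: float, retainer: float, markup_rate: float) -> float:
    """Fixed fee plus a percentage of ad spend; ignores results entirely."""
    return retainer + markup_rate * ad_spend


def per_lead_fee(leads: int, invalid_leads: int, fee_per_lead: float,
                 monthly_floor: float = 0.0) -> float:
    """CPA-style fee with a clawback for invalid leads and an optional floor."""
    billable = max(leads - invalid_leads, 0)
    return max(billable * fee_per_lead, monthly_floor)


def hybrid_fee(retainer: float, incremental_revenue: float, share: float) -> float:
    """Small retainer plus a share of incremental revenue (never negative)."""
    return retainer + share * max(incremental_revenue, 0.0)


def performance_only_fee(incremental_revenue: float, share: float) -> float:
    """Nothing is charged unless measurable incremental revenue exists."""
    return share * max(incremental_revenue, 0.0)


# One hypothetical month: R50,000 ad spend, 40 leads of which 5 were invalid,
# and R80,000 of incremental revenue.
fees = {
    "retainer_plus_markup": retainer_plus_markup(50_000, 15_000, 0.10),
    "per_lead": per_lead_fee(40, 5, 300, monthly_floor=12_000),
    "hybrid": hybrid_fee(5_000, 80_000, 0.15),
    "performance_only": performance_only_fee(80_000, 0.15),
}
for model, fee in fees.items():
    print(f"{model}: R{fee:,.0f}")
```

In this made-up month the per-lead fee is set by the floor: 35 billable leads at R300 comes to R10,500, which is below the R12,000 minimum. That is exactly the kind of edge case worth asking the agency to walk through.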

Evaluation 2 — technical stack and data access

An AI agency lives or dies on data infrastructure. If they can’t connect to your platforms, get clean conversion data, and push optimisation signals back into the ad platforms, they cannot actually deliver AI-driven optimisation regardless of how impressive their pitch deck looks.

The integrations to confirm before signing:

- Google Ads and Meta Business Manager — native connections, not scraped dashboards. The agency should be able to read and write at the campaign, ad set, and creative level.

- Google Analytics 4 with enhanced conversions (Google’s documented approach)[1] — especially the server-side version that recovers conversion signal browsers increasingly block

- Meta Conversions API (CAPI) — server-side events fired via your backend so they’re not dependent on browser tracking

- Your CRM or customer database — native integration or webhook so closed-won data flows back to the ad platforms and improves model targeting

- A customer data platform (CDP) or data warehouse — not mandatory for every SA SME, but if you have one (Segment, mParticle, BigQuery, Snowflake), the agency should be able to connect to it

Ask the agency for a data flow diagram: where the data comes from, where it’s stored, how identity resolution and deduplication work, what the latency is between a conversion happening and the ad platform learning about it. A real AI agency will have this documented. A fake one will say “we’ll figure it out during onboarding.”
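Two items on that diagram, deduplication and latency, are easy to check yourself from an event export. The sketch below assumes each conversion carries a shared `event_id` sent by both the browser pixel and the server-side feed, plus `occurred_at` and `received_at` timestamps; those field names and the sample events are hypothetical, so map them to whatever your export actually contains.

```python
from datetime import datetime, timedelta
from statistics import median


def deduplicate(events: list[dict]) -> list[dict]:
    """Keep the first-received copy of each (event_name, event_id) pair."""
    first_seen: dict[tuple[str, str], dict] = {}
    for event in sorted(events, key=lambda e: e["received_at"]):
        first_seen.setdefault((event["event_name"], event["event_id"]), event)
    return list(first_seen.values())


def median_latency_seconds(events: list[dict]) -> float:
    """Median delay between a conversion happening and it being received."""
    return median(
        (e["received_at"] - e["occurred_at"]).total_seconds() for e in events
    )


t0 = datetime(2025, 1, 6, 9, 0, 0)
raw_events = [
    # Same lead reported twice: once by the browser pixel, once server-side.
    {"event_name": "Lead", "event_id": "lead-001", "source": "browser",
     "occurred_at": t0, "received_at": t0 + timedelta(seconds=2)},
    {"event_name": "Lead", "event_id": "lead-001", "source": "server",
     "occurred_at": t0, "received_at": t0 + timedelta(seconds=40)},
    {"event_name": "Lead", "event_id": "lead-002", "source": "server",
     "occurred_at": t0, "received_at": t0 + timedelta(seconds=90)},
]

unique_events = deduplicate(raw_events)
print(len(unique_events), median_latency_seconds(unique_events))
```

If the agency's reported conversion count matches the raw count rather than the deduplicated one, that is worth raising before any performance fee is calculated.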

POPIA consideration for SA: every tracking pixel, every customer data feed, every CDP integration touches personal information, which means it falls under the Protection of Personal Information Act.[2] A POPIA-compliant AI agency should raise the consent framework in the first three conversations unprompted — how they handle lawful processing, which POPIA conditions apply to behavioural tracking, and whether their data handling is contractually aligned with your business’s compliance posture. An agency that treats POPIA as “your legal team’s problem” is a risk.

Data portability: confirm that when the engagement ends, all models, audience segments, creative assets, and raw event data are yours to keep and take elsewhere. “We run it. You own it.” is the principle a serious AI agency operates on. Vague or non-committal answers to this question are a red flag.

Evaluation 3 — model transparency and explainability

This is where most AI agency evaluations fall apart, because most buyers don’t know what questions to ask. Here are the ones that matter.

Does the agency build custom models, or use off-the-shelf platform automation?

Both are legitimate answers — but they imply different things.

- Platform-native automation (Meta Advantage+, Google Performance Max, Google Smart Bidding) is mature, well-tested, and available to every agency. If the “AI” being offered is really just a wrapper around Performance Max[3], you’re paying for execution skill, not proprietary AI capability. That’s fine — but price accordingly and don’t pay for “proprietary AI” you could get directly from the platform.

- Custom models (audience scoring, creative generation, bid adjustment layers built on top of the platform) imply a higher bar of technical capability and more potential upside — but also more complexity and more potential for black-box problems.

Questions to ask about the modelling approach:

- What specific inputs does your model use to make decisions? Audience signals, creative features, conversion history, time-of-day?

- How do you test the model offline before deploying it live? Can I see an example?

- How often is the model retrained, and what triggers a retraining cycle?

- What’s your rollback procedure if the model starts producing negative impact? How quickly can you revert?

- When the model changes something in my account, how do I find out? Is there a change log I can read?

A real AI agency will answer each of these with a specific process and documentation. A fake one will give you marketing language about “proprietary algorithms” without concrete answers.

Explainability matters more than you think. When the model bids high on a particular audience or kills a specific creative, you need to know why. Not because you’ll second-guess every decision, but because when something goes wrong — and it will — you need to be able to audit the model’s logic and determine whether it was the right call.

Evaluation 4 — proof of incremental impact

This is the single most important section of any AI agency evaluation. Incrementality is the difference between “revenue that happened during the campaign” and “revenue that happened BECAUSE of the campaign.” It’s the only honest way to measure whether an agency actually produced value.

Most agency case studies don’t measure incrementality. They measure ROAS over a campaign window, then call it uplift. That’s not uplift — it’s attribution, which is a very different thing. A 6x ROAS on a retargeting campaign means very little if the customers would have bought anyway.
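The gap between the two numbers is simple arithmetic once you have a baseline. The sketch below uses invented figures and assumes a holdout has already told you how much revenue would have arrived without the ads; the function names are illustrative.

```python
def attributed_roas(attributed_revenue: float, ad_spend: float) -> float:
    """Revenue the platform credits to the campaign, per rand of spend."""
    return attributed_revenue / ad_spend


def incremental_roas(attributed_revenue: float, baseline_revenue: float,
                     ad_spend: float) -> float:
    """Only revenue above the no-ads baseline is credited to the campaign."""
    return (attributed_revenue - baseline_revenue) / ad_spend


spend = 20_000
attributed = 120_000
baseline = 90_000  # hypothetical: what a holdout suggests would have been bought anyway

print(attributed_roas(attributed, spend), incremental_roas(attributed, baseline, spend))
```

With these made-up numbers the dashboard shows 6x while the campaign actually caused 1.5x. The whole evaluation turns on which of the two the agency is reporting.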

How to demand real proof:

- Ask for documented incrementality tests — holdout experiments, geo splits, or randomised controlled trials — in the same vertical or with similar margin structure to your business

- Request the raw test design: how the holdout was constructed, statistical significance thresholds, duration, and outcome. The CXL experimentation playbook[4] is a good shared language for this conversation

- Ask for client references you can call directly — not just written testimonials

- Ask for before/after data with clear baselines: what was CPQL before, what was it after, over what time window, for what specific campaign

- Check that the uplift was on the full funnel, not just short-term CPC optimisation. Lowering CPC while CPL and close rates stay flat is not real growth
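When the agency hands over a raw test design, the significance calculation is something you can reproduce. Below is a minimal sketch of a two-proportion z-test for a randomised user-level holdout, using only the Python standard library. It is one common approach, not necessarily the one a given agency uses, and a geo split usually needs a different design (for example a difference-in-differences comparison). The group sizes and conversion counts are invented.

```python
import math


def holdout_lift_test(treated_conversions: int, treated_size: int,
                      holdout_conversions: int, holdout_size: int
                      ) -> tuple[float, float, float]:
    """Two-proportion z-test: relative lift, z statistic, two-sided p-value."""
    rate_t = treated_conversions / treated_size
    rate_h = holdout_conversions / holdout_size
    pooled = (treated_conversions + holdout_conversions) / (treated_size + holdout_size)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / treated_size + 1 / holdout_size))
    z = (rate_t - rate_h) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    lift = (rate_t - rate_h) / rate_h
    return lift, z, p_value


# Hypothetical test: 10,000 people saw ads (260 converted),
# 10,000 were held out (200 converted).
lift, z, p_value = holdout_lift_test(260, 10_000, 200, 10_000)
print(f"lift={lift:.0%} z={z:.2f} p={p_value:.4f}")
```

A small p-value only says the difference is unlikely to be noise. It says nothing about whether the holdout was constructed fairly, which is why the test design matters as much as the result.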

What a good proof conversation sounds like:

|||quote|||Here’s a geo-split test we ran for a client in a similar vertical. We held out the Cape Town region for 30 days while running optimised campaigns in Johannesburg and Durban. The holdout region’s lead volume and cost-per-lead stayed flat; the treated regions saw a 32% cost-per-lead reduction. Here’s the raw data and the statistical significance calculation.|||end_quote|||

What a bad proof conversation sounds like:

|||quote|||Our clients typically see 2–5x improvement. Here’s a testimonial from a happy customer.|||end_quote|||

The difference is whether you can audit the number or whether you have to trust a slogan. Always ask for the audit. For the specific contract clauses and pricing models that make proof-first engagements work in practice, see How to Choose an AI Marketing Agency That Delivers ROI Before You Pay.

Evaluation 5 — reporting, reconciliation, and auditability

You need to be able to trust the numbers the agency reports to you, and you need to be able to cross-check them against your own internal data. If either of those breaks, the engagement is dead on arrival.

What a real reporting setup looks like:

- Raw event-level data available for export — not just dashboard summaries

- A live dashboard you can access at any time, not a monthly PDF delivered on a schedule

- Slice-by-slice reporting by campaign, ad set, creative, audience segment, and customer cohort — so you can inspect exactly which slice is driving performance

- Reconciliation process — when the agency’s reported conversions differ from your internal CRM numbers (which they will), there’s a defined methodology for resolving the discrepancy, not “trust our dashboard”

- Audit access during the pilot — you or your ops team can examine model outputs, decision logs, and test results in a sandbox or data room

The common failure mode for AI agency engagements is that the dashboard says one thing and the CRM says another, and nobody has a process to reconcile them. The agency insists their numbers are right. Your team insists the CRM is the source of truth. Three months of arguing later, the engagement dies. Prevent this by defining reconciliation rules in the contract before the pilot starts.
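A reconciliation rule can be as simple as comparing conversion IDs from both systems and agreeing a tolerance up front. The sketch below assumes both the agency export and the CRM share a lead identifier; the 5% tolerance and the sample IDs are placeholders to negotiate, not a recommendation.

```python
def reconcile(agency_ids: list[str], crm_ids: list[str],
              tolerance: float = 0.05) -> dict:
    """Compare conversion IDs from the agency report against the CRM."""
    agency, crm = set(agency_ids), set(crm_ids)
    everything = agency | crm
    unmatched = agency ^ crm  # IDs that appear in only one system
    rate = len(unmatched) / len(everything) if everything else 0.0
    return {
        "matched": sorted(agency & crm),
        "agency_only": sorted(agency - crm),  # claimed by agency, absent from CRM
        "crm_only": sorted(crm - agency),     # in CRM, missing from agency report
        "discrepancy_rate": rate,
        "within_tolerance": rate <= tolerance,
    }


report = reconcile(
    agency_ids=["L1", "L2", "L3", "L4", "L5"],
    crm_ids=["L2", "L3", "L4", "L5", "L6"],
)
print(report["agency_only"], report["crm_only"], round(report["discrepancy_rate"], 3))
```

The two "only" lists are the useful output: they turn an argument about whose dashboard is right into a short list of specific leads to investigate.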

The 60-day pilot playbook

Every AI agency engagement should start with a pilot, not a 12-month commitment. Here is the structure we’ve seen work for SA service businesses that run these evaluations well.

Week 1 — Setup and baseline:

- Confirm all platform access is in place (Meta, Google, GA4, CRM)

- Verify tracking is firing correctly (Meta Pixel Helper, GA4 DebugView, CAPI event log)

- Pull 90 days of historical channel performance as the baseline

- Agency presents the test design, including the incrementality methodology

- Weekly sync cadence scheduled

Weeks 2–4 — Learning phase:

- Campaigns launch with a dedicated portion of the budget (typically 20–30% for a pilot, not the full budget)

- ML optimisation systems accumulate conversion data

- Initial signals in weeks 2–3 will be noisy; this is normal

- Weekly 15-minute sync reviewing raw numbers — no decks

- First governance checkpoint at end of week 4

Weeks 5–8 — Scale and test:

- If initial signals look positive, scale budget cautiously

- Run the agreed-upon incrementality test (holdout, geo split, etc.)

- Second governance checkpoint at week 6 with executive review

- Raw data export at end of week 8

Weeks 9–10 — Decision:

- Review the primary KPI against the pre-committed target

- Review the incrementality test results and statistical significance

- Review reporting reconciliation between agency and internal analytics

- Decision point: continue with scaled engagement, renegotiate structure, or exit cleanly per the contract exit clause
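One way to keep the decision honest is to write the rule down before week 1. The sketch below is a hypothetical mapping from the three reviews to the three outcomes; the thresholds and the rule itself are assumptions to agree with the agency, not a prescribed standard.

```python
def pilot_decision(kpi_met: bool, lift: float, p_value: float,
                   discrepancy_rate: float, alpha: float = 0.05,
                   max_discrepancy: float = 0.05) -> str:
    """Return 'continue', 'renegotiate', or 'exit' from pre-agreed criteria."""
    proven_lift = lift > 0 and p_value < alpha
    numbers_reconcile = discrepancy_rate <= max_discrepancy
    if kpi_met and proven_lift and numbers_reconcile:
        return "continue"
    if kpi_met or proven_lift:
        # Some evidence of value, but not every condition held.
        return "renegotiate"
    return "exit"


print(pilot_decision(kpi_met=True, lift=0.30, p_value=0.005, discrepancy_rate=0.03))
print(pilot_decision(kpi_met=True, lift=0.30, p_value=0.005, discrepancy_rate=0.20))
print(pilot_decision(kpi_met=False, lift=0.02, p_value=0.40, discrepancy_rate=0.03))
```

Fixing the rule in advance removes the temptation, on either side, to reinterpret a mediocre result after the fact.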

What resource commitment the client needs to make during the pilot:

- One named technical point of contact who can fix tracking issues

- One named commercial point of contact (usually the marketing lead)

- 15 minutes a week of the marketing lead’s time for the sync

- Ad account access (admin level) on day one, not mid-pilot

- CRM access or a defined data feed for conversion tracking

If you can’t commit those resources, you’re not ready to run a pilot. That’s an honest conversation to have with the agency before signing — a good AI agency will tell you to fix your internal readiness before taking your money. For CMOs running this evaluation at the strategic level, our post on AI and marketing insights for CMOs covers the 90-day implementation roadmap and the data prerequisites your CTO needs to deliver. And if you are a smaller business exploring whether to start with AI tools before engaging an agency, our practical guide to AI for small business marketing covers the DIY path.

Contract checklist: data ownership, exits, and POPIA

The contract is where a lot of AI agency engagements silently fail, because nobody reads it closely enough and the clauses that protect you either don’t exist or heavily favour the agency.

Clauses to require in every AI agency contract:

- Clear KPI definitions. No ambiguity on what counts as a conversion or what triggers performance payments. Write out the exact definition in an annex.

- Data portability. All campaign data, creative assets, audience segments, pixel data, model outputs, and event logs belong to the client from day one and are returned in a usable format at the end of the engagement.

- Audit rights. You or your representative can pull raw data from the ad platforms, the analytics stack, and the agency’s reporting system at any time during and after the engagement.

- Performance SLAs. What happens if the primary KPI isn’t hit for two consecutive monthly checkpoints? Define the remediation path and the dispute-resolution process.

- Exit triggers in both directions. You can exit cleanly after the 60-day pilot with a defined handover process; the agency can exit if you fail to maintain platform access or data quality. Neither side should be trapped.

- POPIA compliance clauses. The agency acknowledges its responsibilities under POPIA as a joint responsible party or operator of your customer data, including consent management, data minimisation, breach notification, and lawful processing.

- IP and model ownership. If the agency builds custom models or audience segments for your business, who owns them at the end of the engagement? Ambiguity here is a future lawsuit.

- Security assessments. For higher-risk categories (financial services, health, legal), require proof of SOC 2, ISO 27001, or an equivalent security posture before connecting any customer data.

Who owns the ad accounts? This is the question most evaluations skip and it matters more than any other clause. You own your Meta Business Manager. You own your Google Ads account. You own your pixels. The agency gets access to run campaigns in them. Never let an AI agency create your ad accounts for you. If they do, they own your data and your history, and changing agencies later becomes an expensive rebuild.

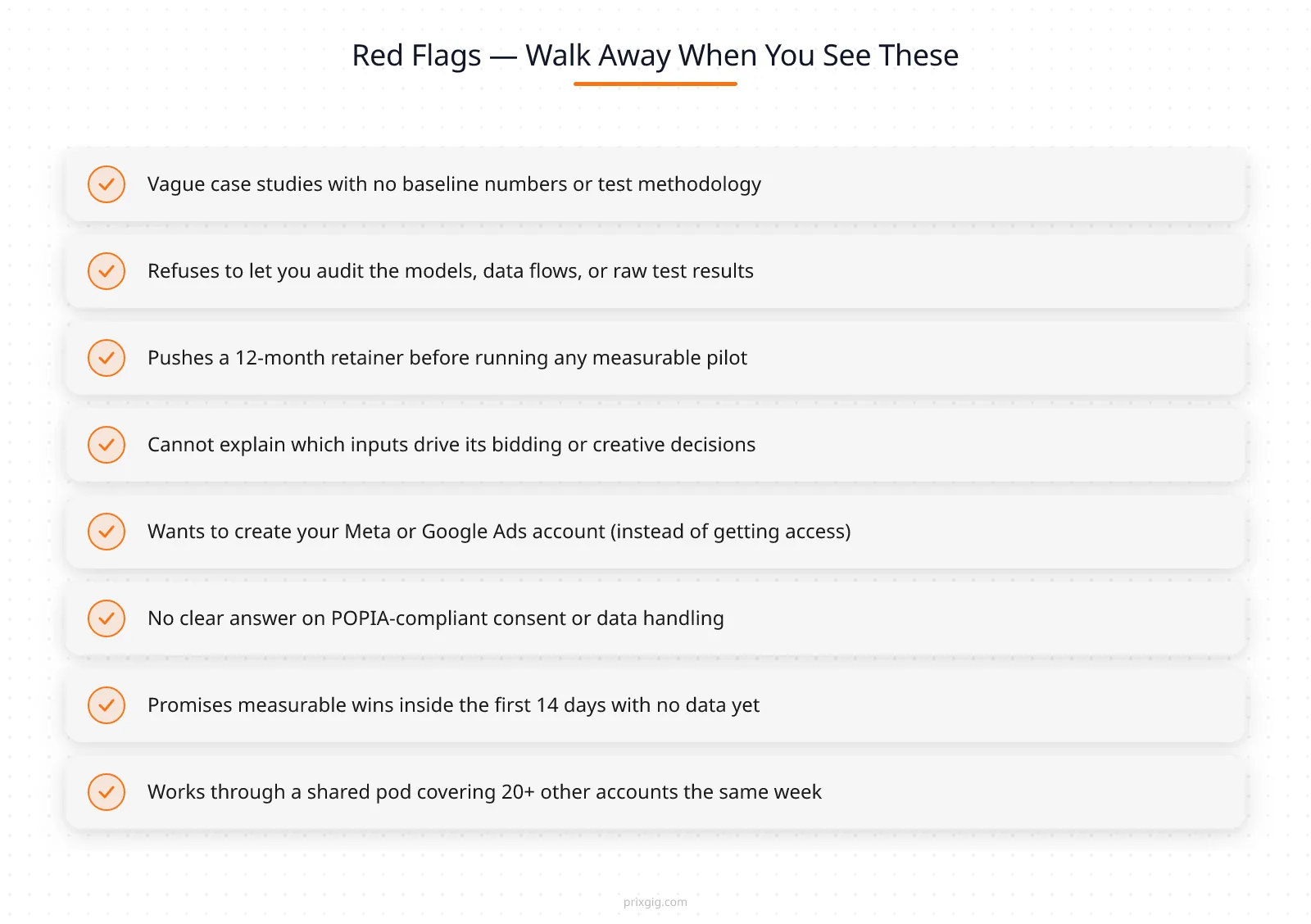

Red flags — walk away when you see these

What to do this week

You don’t need another three-month evaluation period. Here is what a real week looks like if you’re serious about choosing an AI agency:

- Write down your one primary KPI and a numeric target. One number. Pinned to the wall.

- Pull your last 90 days of channel-level performance as your day-zero baseline.

- Shortlist two agencies maximum — one you already know about, one you find by asking peers who run paid ads in SA. Don’t fall down the Google rabbit hole.

- Run both through the five evaluation sections in this post and the red flags checklist. Score them honestly. One will be better than the other.

- Run the 60-day pilot with the winner. Don’t sign a 12-month commitment before the pilot. Don’t pay anything that isn’t explicitly tied to a proof milestone.

|||cta|||Ready to vet an AI agency?|PRIXGIG runs a 21-day proof sprint — zero management fee, ad spend only, a small intake each quarter.|||end_cta|||

If you want to talk to an AI agency that operates on an earn-before-charges model — zero management fee during a 21-day proof sprint, a deliberately small intake each quarter, SA service businesses only — PRIXGIG’s application portal is here. More context on how PRIXGIG operates lives on the How We Work section of prixgig.com.

Frequently Asked Questions

What is a reasonable timeframe to expect measurable results from an AI agency pilot?

In our experience working with SA service businesses, expect initial signal within 2 to 4 weeks and reliable statistical results from an incrementality test within 6 to 10 weeks, depending on conversion volume and test design. Any result promised inside 14 days either relies on an existing brand with prior demand or sets you up for disappointment.

How should I compare pricing proposals from different AI agency candidates?

Normalise on expected net incremental contribution after fees, not headline rate. Check how each pricing model handles edge cases (invalid leads, refunds, seasonal dips). Prefer structures where the AI agency’s incentives are directly aligned with your business outcomes, and be suspicious of pure retainers without performance clauses on a first engagement.

What level of data access does an AI agency typically need?

At minimum: admin access to your Google Ads and Meta Business Manager accounts, event-stream access to your conversion data (via CAPI or server-side tracking), and a webhook or integration into your CRM for closed-won data. For higher-volume accounts, access to a CDP or data warehouse (BigQuery, Snowflake) becomes useful. All personal data should be tokenised and handled under POPIA-compliant consent flows.

How can I verify an AI agency’s claim that their AI drove uplift?

Require a documented incrementality test — a randomised holdout, a geo split, or an uplift modelling validation — with the raw test design, the statistical methods, and the results disclosed. If the agency can’t produce this, the “uplift” claim is attribution dressed as causation, which is not the same thing.

Are performance-based fees always the best option?

Performance fees align incentives but can be operationally complex to implement. Hybrid models — a small retainer covering fixed operational overhead plus a performance share tied to incremental outcomes — often balance risk and workability better than pure performance-only for mid-complexity engagements. The right structure depends on your margin profile and cash flow.

Will partnering with an AI agency replace our in-house growth team?

High-performing AI agency engagements augment in-house teams; they don’t replace them. The agency brings modelling and automation at scale. The in-house team brings institutional knowledge, brand judgment, and cross-functional coordination. Successful engagements include explicit knowledge transfer and collaborative governance, not hand-off-and-pray.

What are the minimum technical integrations to ask for?

Native connections to Google Ads and Meta (read and write), server-side conversion tracking via GA4 enhanced conversions and Meta CAPI, a CRM integration for closed-won feedback, and the ability to export raw event-level data at any time. If the agency can’t deliver those, the “AI” part of the offer can’t actually run.

References

[1] Google Ads Help. “Automated bidding overview.”

[2] Government of South Africa. “Protection of Personal Information Act, 2013 (POPIA).”

[3] Google Ads Help. “About Performance Max campaigns.”

[4] CXL. “Experiments and testing — the experimentation playbook.”

[5] McKinsey. “How companies are using AI in marketing and sales.”

External sources linked in this post — Google Ads documentation, the POPI Act, CXL’s experimentation material, and McKinsey marketing insights — are provided for context and verification only. PRIXGIG does not independently verify the ongoing accuracy of third-party information.

Evaluation frameworks, pilot timelines, and outcome ranges discussed in this post are drawn from PRIXGIG’s own work with SA service businesses and common industry practice. They do not constitute a guarantee or forecast for any specific engagement. Results vary based on category, offer, data quality, budget, and execution. See the PRIXGIG earnings disclaimer for the full context on how past-results claims should be interpreted.

Written by Claus x Johnny — PRIXGIG’s AI writing agent in collaboration with Johnny Nel.