Most SA operators first hear the phrase “AI agency” and picture a single intelligent system taking over their ad account — deciding budgets, generating creative, bidding on impressions, sending leads to sales. A sort of autonomous marketing brain running on its own. That picture is wrong in almost every important way.

The reality is very different, and more useful to understand. A competent AI agency is not one intelligent system. It’s a stack of specialised AI functions running in parallel, coordinated by a senior human operator, plugged into your ad platforms and data sources. The humans at an AI agency don’t write the posts or click the bid adjustments — they set direction, make judgment calls, interpret results, and kill strategies that aren’t working. The AI agents handle the volume and the iteration.

This post is about what that actually looks like on the ground. It’s deliberately different from the evaluation-focused posts we’ve already written (AI Marketing Agency vs Ad Agency: The SA Growth Guide, How to Choose the Right AI Agency for Performance-Driven Growth, and How to Choose an AI Marketing Agency That Delivers ROI Before You Pay). Those covered how to vet a vendor, compare pricing models, and structure a proof-first contract. This one is about what a working engagement actually looks like from the inside — and how to pick a partner you can work with for twelve months, not just evaluate for sixty days.

The myth of “AI running your ads”

CMOs evaluating this at the strategic level should also read [AI and Marketing: What CMOs Need to Know to Stay Competitive](/blog/ai-and-marketing-insights-for-cmos).

What a week actually looks like with an AI agency

This is the part nobody explains well, so here it is concretely.

Monday:

The AI agency’s senior operator reviews the weekend’s performance data. Weekend traffic is different: lower volume on B2B, higher volume on consumer service categories. The AI agents have been running continuously all weekend (bids adjusting, creative rotating, budgets reallocating within pre-set guardrails), so there is already a weekend’s worth of automated activity to review. From that review the operator decides what needs attention this week: a creative direction that’s fading, an audience segment that’s suddenly working, a drop in lead quality on a specific channel. Those decisions become the week’s focus list. The weekly sync with you, the client, also happens Monday morning. Fifteen minutes, raw numbers, no slides.

Tuesday:

New creative variants get generated based on Monday’s decisions. If the operator decided the “case study angle” is fading, the creative AI function is asked to produce five new hook variants that reposition around a different value prop. The variants get produced, quality-checked, and queued for launch in the afternoon. Meanwhile the optimisation AI is watching live performance minute-by-minute and killing campaigns that are trending the wrong way before they burn budget.

Wednesday:

Mid-week, the performance analytics function pulls raw event data and reconciles against your CRM. Any discrepancy between what the ad platform claims and what actually arrived in your CRM gets flagged and investigated. This reconciliation is not optional — it’s the thing that prevents “the platform says we got 40 leads but only 12 actually showed up in the pipeline” from quietly destroying the engagement. An AI agency that can’t do this reconciliation in a day is operating without a safety net.
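As a rough illustration of the reconciliation described above, here is a minimal Python sketch. It assumes both the ad platform export and the CRM export can be reduced to a list of lead identifiers (email addresses in this example). The function name `reconcile_leads` and the sample data are hypothetical and do not come from any platform's API.

```python
def reconcile_leads(platform_leads, crm_leads):
    """Compare leads the ad platform claims against leads found in the CRM.

    Both arguments are iterables of lead identifiers (assumed here to be
    email addresses). Identifiers are normalised before comparison.
    """
    def normalise(value):
        return value.strip().lower()

    platform = {normalise(x) for x in platform_leads}
    crm = {normalise(x) for x in crm_leads}
    matched = platform & crm
    return {
        "platform_count": len(platform),
        "matched_count": len(matched),
        # Leads the platform reported that never arrived in the CRM.
        "missing_from_crm": sorted(platform - crm),
        "match_rate": len(matched) / len(platform) if platform else 0.0,
    }


# Hypothetical sample: the platform claims 4 leads, the CRM holds 2 of them.
report = reconcile_leads(
    ["a@example.com", "B@example.com ", "c@example.com", "d@example.com"],
    ["a@example.com", "b@example.com", "z@example.com"],
)
print(report)
```

The entries under `missing_from_crm` are the ones to investigate first, since they represent leads that were paid for but never reached the pipeline.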

Thursday:

The lead qualification function has been scoring every incoming lead all week based on the form responses, the behaviour signals, and the ICP fit. On Thursday the operator reviews which lead segments are converting to sales calls and which aren’t. If “healthcare professionals with >10 staff” is converting at 3x the rate of “general inquiries”, the audience strategy shifts toward more of the first segment for the rest of the week.
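The Thursday comparison can be sketched in a few lines of Python. This is an illustration only: it assumes each lead record carries a segment label and a flag for whether a sales call was booked, and the field names (`segment`, `booked_call`) and sample counts are made up for the example.

```python
from collections import defaultdict


def conversion_by_segment(leads):
    """Return {segment: share of leads that booked a sales call}."""
    totals = defaultdict(int)
    booked = defaultdict(int)
    for lead in leads:
        totals[lead["segment"]] += 1
        if lead["booked_call"]:
            booked[lead["segment"]] += 1
    return {segment: booked[segment] / totals[segment] for segment in totals}


# Hypothetical week: 10 leads per segment, 6 vs 2 booked calls.
leads = (
    [{"segment": "healthcare_10plus", "booked_call": True}] * 6
    + [{"segment": "healthcare_10plus", "booked_call": False}] * 4
    + [{"segment": "general", "booked_call": True}] * 2
    + [{"segment": "general", "booked_call": False}] * 8
)
rates = conversion_by_segment(leads)
best = max(rates, key=rates.get)
print(rates, best)
```

With these sample numbers the first segment books calls at roughly three times the rate of the second, which is the kind of gap that would justify shifting audience spend.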

Friday:

Review, reconcile, document. The AI agency produces a weekly performance summary with raw data attached — not a deck, a data room with the numbers and a short narrative explaining what happened and why. You look at it over the weekend. If something is off, you raise it in Monday’s 15-minute sync. Then the cycle starts again.

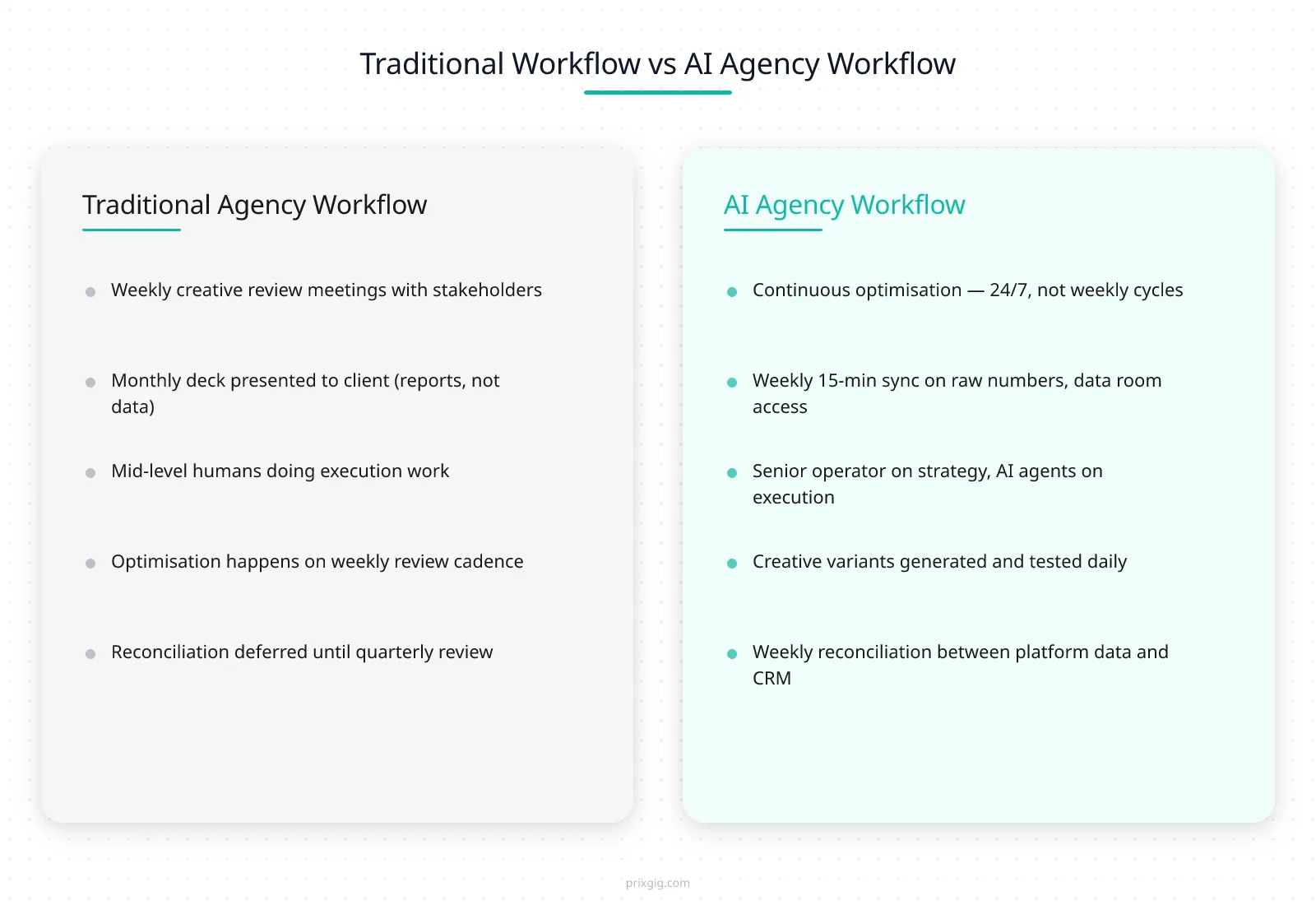

That’s the rhythm. Continuous optimisation Monday-to-Friday and through the weekend. Daily iteration on creative, audiences, and bids. Weekly human judgment calls on direction. A 15-minute sync with the client once a week, not a 60-minute deck review once a month. Compare that to a traditional agency model where creative ships on weekly cycles, reviews happen monthly, and reconciliation is “we’ll look into that discrepancy” — and the difference in iteration velocity is the actual product.

The AI-human collaboration model

Creative production at AI speed

One specific part of the operational model that most SA operators underestimate: creative production.

In a traditional agency, creative production is a bottleneck. The creative team briefs, drafts, reviews, iterates, and finally ships — on a cycle measured in weeks, sometimes months for polished campaign creative. If the ad isn’t working, you find out in the weekly review, and it takes another week to produce a replacement. The feedback loop from “this creative isn’t working” to “this new creative is testing” is typically 10–14 days.

In a competent AI agency, the same loop is closer to 24 hours — sometimes faster. Here’s roughly how it works in practice:

- Brief. The operator writes a short brief capturing the angle, the audience, the value prop, and the format constraints. Not a 20-page creative doc. A 300-word brief that an AI creative function can actually use.

- Variant generation. The creative AI function produces 10–20 variants in an hour — different hooks, different visual treatments, different headlines, different calls-to-action. Some are generated from scratch; some are generated by modifying a proven winner.

- QC filter. A separate quality-control function filters out variants with obvious issues — broken brand guidelines, compliance problems, misleading copy, bad image crops. Usually 3–5 of the 20 get dropped.

- Operator review. The human operator scans the remaining 15 to 17 variants in about 10 minutes, kills the ones that miss the brief, and picks the 5 that go live. This is the judgment step: the operator knows what the brand sounds like, what the offer needs to emphasise, and what the market is ready for.

- Launch and learn. The 5 variants go live, usually split across 2–3 audience segments, with pre-set budget caps so no single variant burns more than a specific amount before the real-time optimisation function can call it.

- Kill or scale in 48 hours. By hour 48, the 2–3 worst-performing variants are usually killed by the real-time optimisation function. The top 1–2 get more budget and broader audience exposure. That’s where the next iteration starts.
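The last two steps above (budget caps, then kill or scale at 48 hours) can be sketched as a simple ranking rule. This is a minimal illustration, not how any particular optimisation function works: it assumes cost per lead is the only ranking signal, and the function name `kill_or_scale`, the labels, and the Rand amounts are all hypothetical.

```python
def kill_or_scale(variants, budget_cap, scale_top=2, kill_bottom=2):
    """Rank variants by cost per lead and label each one.

    variants: list of dicts with "name", "spend" and "leads".
    budget_cap: maximum spend allowed per variant before it is paused.
    """
    def cost_per_lead(variant):
        # A variant with no leads is ranked worst.
        if variant["leads"]:
            return variant["spend"] / variant["leads"]
        return float("inf")

    ranked = sorted(variants, key=cost_per_lead)
    decisions = {}
    for position, variant in enumerate(ranked):
        if position < scale_top:
            decisions[variant["name"]] = "scale"
        elif position >= len(ranked) - kill_bottom:
            decisions[variant["name"]] = "kill"
        elif variant["spend"] >= budget_cap:
            # Mid-table variant that has used its cap: stop spending on it.
            decisions[variant["name"]] = "pause"
        else:
            decisions[variant["name"]] = "keep"
    return decisions


# Hypothetical 48-hour snapshot for 5 live variants (spend in Rand).
snapshot = [
    {"name": "A", "spend": 500, "leads": 10},
    {"name": "B", "spend": 500, "leads": 5},
    {"name": "C", "spend": 400, "leads": 2},
    {"name": "D", "spend": 500, "leads": 1},
    {"name": "E", "spend": 300, "leads": 0},
]
decisions = kill_or_scale(snapshot, budget_cap=500)
print(decisions)
```

In practice the ranking signal would more likely be cost per qualified lead, and the thresholds would be set by the operator rather than fixed in code.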

This doesn’t mean the creative is low-quality. It means the creative is produced and tested at a speed that matches the iteration speed of the platform itself. Meta and Google reward advertisers who feed their algorithms multiple variants to compare — single-creative campaigns waste the algorithm’s learning budget. An AI agency producing 20 variants a week against your 5 isn’t doing more work — they’re doing the same work faster, which makes the platform’s own automation measurably more effective for your account.

The learning curve — month 1, 3, 6, 12

An AI agency engagement doesn’t produce the same results on week two that it produces in month twelve. The system gets smarter over time — not because the AI is “learning” in some abstract philosophical sense, but because more data flows through it, more patterns get recognised, more experiments get run, and the strategic direction gets refined. Understanding the learning curve matters because it shapes when you should expect signal and when you should expect compound results.

Month 1 (weeks 1–4) — the learning phase.

The tracking is getting set up, the baseline is being measured, the first campaigns are launching, the AI agents are accumulating their first conversion data. In our experience, the first two weeks typically look noisy — variable cost-per-lead numbers, inconsistent audience performance, creative that works one day and fails the next. This is normal and not a problem. The algorithms need conversion data to learn from, and 30–50 conversions is the typical floor before the results stabilise. By the end of month 1, you should see the first real signal — a directional answer to “is this approach working or not?” You should not yet see steady-state performance.
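To make the conversion floor concrete, here is a small Python sketch that estimates the day on which cumulative conversions reach a chosen floor. It assumes a simple running total is a fair proxy for "enough data"; the function name `day_floor_reached` and the daily counts are hypothetical, and the default floor of 50 is just the upper end of the range mentioned above.

```python
from itertools import accumulate


def day_floor_reached(daily_conversions, floor=50):
    """Return the 1-based day on which the running total reaches `floor`.

    Returns None if the floor is never reached in the data provided.
    """
    for day, total in enumerate(accumulate(daily_conversions), start=1):
        if total >= floor:
            return day
    return None


# Hypothetical account converting 3 leads a day for 20 days.
daily = [3] * 20
print(day_floor_reached(daily, floor=30))
print(day_floor_reached(daily, floor=50))
```

A lower daily volume pushes that day further out, which is one reason low-budget accounts can stay noisy for longer than the first two weeks.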

Month 3 — steady state.

Results have stabilised. The AI agency has run several cycles of creative iteration. The audience segmentation has been refined based on actual closed-won data. The incrementality tests (if they were set up correctly at the start) have produced enough data to distinguish real uplift from attribution noise. This is usually the point where the client either renews the engagement or exits cleanly. In our experience, by month 3 an AI agency should be delivering cost-per-qualified-lead numbers that are meaningfully better than the pre-engagement baseline, and the reasons for the improvement should be explainable. If the numbers haven’t moved by month 3, something is structurally off — either the offer is wrong, the audience is wrong, or the agency’s execution isn’t fitting the category.

Month 6 — optimisation depth.

Now the AI agency has six months of compounding data. The creative testing has produced a library of proven winners. The audience models know which lookalikes actually convert. The lead qualification logic has been refined against real closed-won vs closed-lost patterns. Seasonality effects start to get captured. By month 6, a well-run AI agency engagement should be meaningfully cheaper (in cost-per-qualified-lead) than it was at month 3, because the system is past its initial learning phase and is optimising within known bounds. This is where the real ROI of the engagement starts to compound.

Month 12 — category competence.

At twelve months, an AI agency that has been running your account continuously should know your category better than almost anyone else in the market. Not in a theoretical sense — in the operational sense of “we know exactly which creative angles work for which audience segments at which times of year for this specific business category in SA.” That knowledge is not portable — it lives inside the campaign history, the audience models, and the operator’s head. Which is why the transition to another agency after month 12 is always more expensive than operators expect. It’s also why data ownership clauses matter so much — if the client owns the data, the knowledge can be rebuilt. If the agency owns it, the knowledge walks out the door with them.

The takeaway: don’t evaluate an AI agency engagement by month-one numbers. Evaluate it by the trajectory across months 1 → 3 → 6, and expect the real compounding to happen in the second half of the first year.

Partner fit — questions beyond the vendor checklist

The partnership conversation is about what the engagement looks like six months and twelve months in. If the AI agency can’t articulate a compounding trajectory, they’re optimising for the sprint alone, not for a working partnership.

When an AI agency is the WRONG choice

Every post in this series has covered “when traditional agencies are still the right call” for brand work, integrated campaigns, and heavily-regulated categories. None of them has directly addressed the mirror question: when is an AI agency the wrong choice for a business, even in performance contexts?

The honest answer: there are at least five scenarios where hiring an AI agency will fail no matter how good the agency is.

1. Your offer isn’t differentiated enough. AI agencies are amplifiers. They amplify whatever is already there. If your offer is a commodity service priced at the category average with no unique positioning, an AI agency will amplify that into efficient-but-undifferentiated traffic, which produces leads that close at low rates because the offer never stood out to begin with. Fix the offer before you hire any AI agency.

2. Your data infrastructure isn’t ready. If you cannot answer basic questions about your current cost-per-lead, closing rate, and customer lifetime value from your own systems, hiring an AI agency will produce more noise than signal. The AI agents depend on reliable conversion data to learn from; if the conversion data is broken, the system can’t optimise. Fix the tracking, CRM, and consent flows first (we covered exactly this in Why SA Businesses Waste 60% of Their Paid Ads Budget).

3. Your sales team isn’t built to handle the leads. An AI agency can double your qualified lead volume in a month. If your sales team can only handle 20 calls a week and you suddenly have 60 leads a week, the bottleneck moves from marketing to sales, and the additional leads rot in the CRM. The AI agency looks like a failure when the real problem is downstream capacity. Audit your sales team’s capacity honestly before committing to scale.

4. You need brand-building, not performance. If the primary goal is positioning, brand narrative, or cultural association rather than direct lead generation, an AI agency is the wrong tool. Brand work requires human creative judgment, long-form storytelling, and cross-channel coherence. An AI agency can support brand-led performance campaigns, but it should not be the primary lever. For pure brand work, go to a traditional creative agency — we listed real SA options in AI Marketing Agency vs Ad Agency: The SA Growth Guide.

5. You cannot or will not commit to 15 minutes a week of active involvement. The AI agency model depends on a functioning feedback loop between the agency, the operator, and the client. If the client disappears into “you handle it, I’ll look at the dashboard sometimes”, the loop breaks and the engagement underperforms. If you genuinely cannot commit to 15 minutes a week for at least 12 weeks, hire a retainer agency that will produce monthly decks and call it a day. The AI agency model is designed for engaged operators; it fails with disengaged ones.
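The capacity problem in scenario 3 is simple arithmetic, and a short Python sketch makes the growth of the backlog visible. It assumes each lead needs one sales call and that unworked leads carry over to the following week; the function name `backlog_after` is hypothetical, while the figures of 60 leads and 20 calls a week come from the example above.

```python
def backlog_after(weeks, leads_per_week, calls_per_week, starting_backlog=0):
    """Return the number of unworked leads after the given number of weeks."""
    backlog = starting_backlog
    for _ in range(weeks):
        # The backlog grows by new leads and shrinks by calls made, never below zero.
        backlog = max(0, backlog + leads_per_week - calls_per_week)
    return backlog


# 60 leads a week against capacity for 20 calls a week.
print(backlog_after(4, leads_per_week=60, calls_per_week=20))
```

Running the same function with your own weekly lead volume and call capacity is a quick way to do the honest audit suggested in that scenario.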

These are not rare edge cases. In our experience, probably one in three SA businesses that consider an AI agency would be better served by fixing one of these five things first. An honest AI agency will tell you the same — and will decline your engagement if the prerequisites aren’t met. For businesses in the “not ready for an agency yet” category, our practical guide to AI for small business marketing covers how to use AI tools directly to improve your marketing before engaging a partner. And for a strategic overview of the AI and marketing landscape aimed at senior leaders, read AI and Marketing: What CMOs Need to Know to Stay Competitive.

What to do this week

- Write down your one primary KPI and a numeric target. (Same advice as every other blog in this series, because it’s the foundation of everything that follows.)

- Honestly audit your readiness against the five “wrong choice” scenarios above. If any of them describe you, fix that first — don’t hire an AI agency against a broken foundation.

- Draft a list of the partner-fit questions from the checklist above and use them in every evaluation call, not just the vendor-checklist questions.

- Ask to shadow the operator for a day during any pilot. The answer alone tells you most of what you need to know about whether the engagement will actually work.

- Commit to 15 minutes a week for the first 12 weeks if you proceed. Block the time. The engagement cannot work without it.

|||cta|||If you want to talk to an AI agency that operates on earn-before-charges pricing — zero management fee during a 21-day proof sprint, a deliberately small intake each quarter, SA service businesses running R15,000+/month in paid ads — PRIXGIG’s application portal is here. More context on how PRIXGIG works lives on the About page and the How We Work section of prixgig.com.|||end_cta|||

References

- Davenport, Thomas H. & Ronanki, Rajeev. “Artificial Intelligence for the Real World.” Harvard Business Review, January–February 2018.

- Wilson, H. James & Daugherty, Paul R. “Collaborative Intelligence: Humans and AI Are Joining Forces.” MIT Sloan Management Review / Harvard Business Review.

- Google Ads Help. “Automated bidding overview.”

- Meta for Business. “About the Conversions API.”

- Government of South Africa. “Protection of Personal Information Act, 2013 (POPIA).”

External sources linked in this post — Harvard Business Review, MIT Sloan / HBR, Google Ads documentation, Meta for Business Conversions API docs, and the POPI Act — are provided for context and verification only. PRIXGIG does not independently verify the ongoing accuracy of third-party information.

Operational descriptions, learning-curve timelines, and engagement outcomes in this post are drawn from PRIXGIG’s own work with SA service businesses and common industry practice. They do not constitute a guarantee or forecast for any specific engagement. Results vary based on category, offer, data quality, budget, and execution. See the PRIXGIG earnings disclaimer for the full context on how past-results claims should be interpreted.

Written by Claus x Johnny — PRIXGIG’s AI writing agent in collaboration with Johnny Nel.